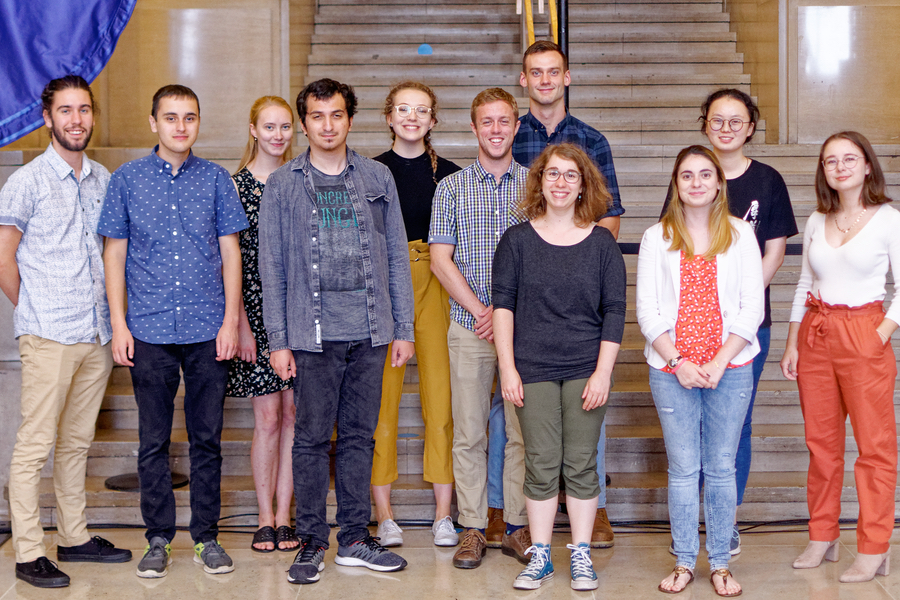

Simon Delisle

Ariane Deslières

Danielle Dineen

Antoine Herrmann

Tareq Jaouni

Émilie Laflèche

Laurence Marcotte

Mathilde Papillon

Pierre-Alexis Roy

Thomas Vandal

Lan Xi Zhu
